import os

os.environ["OPENAI_API_KEY"] = ''

os.environ["OPENAI_API_BASE"] = ''

import streamlit as st

from langchain.llms import OpenAI

from langchain.prompts import PromptTemplate

from langchain.chains import LLMChain

from langchain.document_loaders import TextLoader

from langchain.text_splitter import CharacterTextSplitter

from langchain.vectorstores import Chroma

from langchain.embeddings.openai import OpenAIEmbeddings

# Embedding model used to index the uploaded document.
embeddings = OpenAIEmbeddings(
    model='text-embedding-ada-002'
)

# Language model used to answer questions.
llm = OpenAI(
    model_name='gpt-3.5-turbo'
)

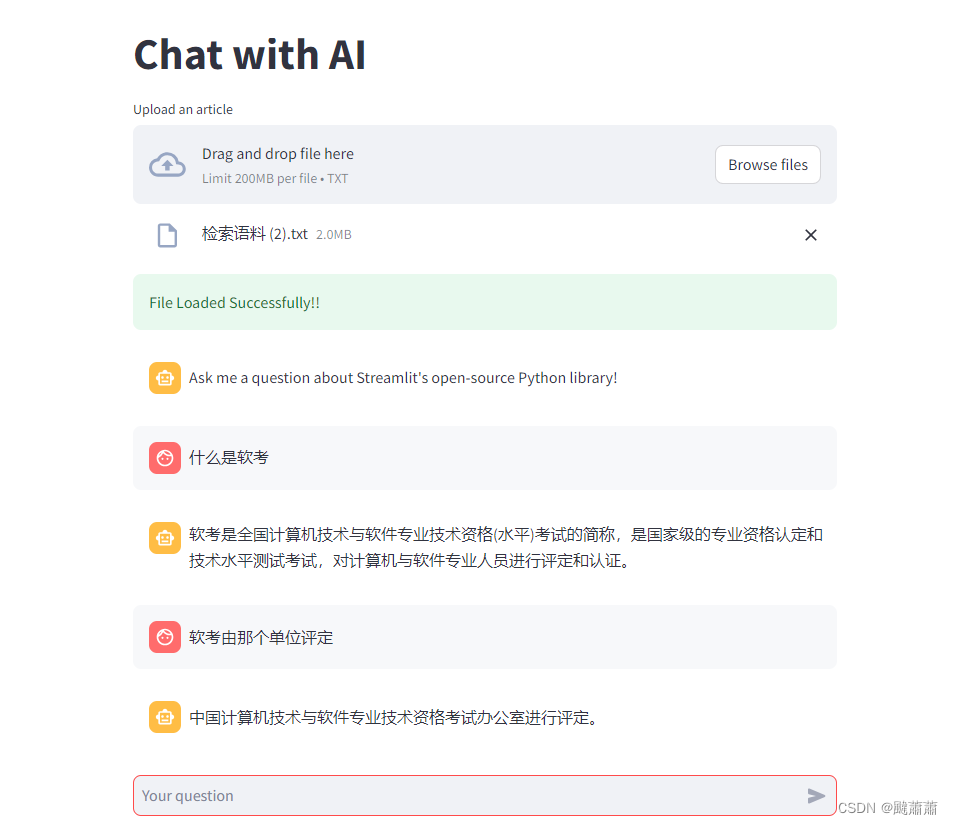

st.set_page_config(page_title="Chat", page_icon="", layout="centered", initial_sidebar_state="auto", menu_items=None)

st.title("Chat with AI")

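def write_text_file(content, file_path):
    """Write content to file_path; return True on success, False on failure."""
    try:
        # Create the parent directory (e.g. "temp/") if it does not exist yet.
        os.makedirs(os.path.dirname(file_path) or ".", exist_ok=True)
        with open(file_path, 'w') as file:
            file.write(content)
        return True
    except Exception as e:
        print(f"Error occurred while writing the file: {e}")
        return False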

uploaded_file = st.file_uploader("Upload an article", type="txt")

if uploaded_file is not None:
    content = uploaded_file.read().decode('utf-8')
    file_path = "temp/file.txt"
    # Stop early if the upload could not be saved to disk.
    if not write_text_file(content, file_path):
        st.error("Could not save the uploaded file.")
        st.stop()

    # Load the saved file, split it into chunks, and index the chunks in Chroma.
    loader = TextLoader(file_path)
    docs = loader.load()
    text_splitter = CharacterTextSplitter(chunk_size=100, chunk_overlap=0)
    texts = text_splitter.split_documents(docs)
    db = Chroma.from_documents(texts, embeddings)
    st.success("File Loaded Successfully!!")

if "messages" not in st.session_state.keys():
    st.session_state.messages = [
        {"role": "assistant", "content": "Ask me anything!"}
    ]

if "chat_engine" not in st.session_state.keys():
    st.session_state.chat_engine = None

if question := st.chat_input("Your question"):
    st.session_state.messages.append({"role": "user", "content": question})

for message in st.session_state.messages:
    with st.chat_message(message["role"]):
        st.write(message["content"])

if st.session_state.messages[-1]["role"] != "assistant":
    with st.chat_message("assistant"):
        if uploaded_file is None:
            # No document has been indexed yet, so `db` does not exist.
            response = "Please upload an article first."
        else:
            with st.spinner("Thinking..."):
                # Retrieve the single most similar chunk to use as context.
                similar_doc = db.similarity_search(question, k=1)
                context = similar_doc[0].page_content
                prompt_template = """
Use the following pieces of context to answer the question at the end. If you don't know the answer, just say that you don't know, don't try to make up an answer.

{context}

Question: {question}

Answer:
"""
                prompt = PromptTemplate(template=prompt_template, input_variables=["context", "question"])
                query_llm = LLMChain(llm=llm, prompt=prompt)
                response = query_llm.run({"context": context, "question": question})
        st.write(response)
        message = {"role": "assistant", "content": response}
        st.session_state.messages.append(message)