YARN集群模式

本文内容需要基于 Hadoop 集群搭建完成的基础上来实现

如果没有搭建,请先按上一篇:

<Linux 系统 CentOS7 上搭建 Hadoop HDFS集群详细步骤>

搭建:https://mp.weixin.qq.com/s/zPYsUexHKsdFax2XeyRdnA

配置 yarn-site.xml

vim etc/hadoop/yarn-site.xml添加内容如下:

<configuration>

<property>

<name>yarn.nodemanager.aux-services</name>

<value>mapreduce_shuffle</value>

</property>

<property>

<name>yarn.resourcemanager.hostname</name>

<value>node3</value>

</property>

</configuration>配置 mapred-site.xml

[zhang@node3 hadoop]$ vi mapred-site.xml添加内容如下:

<configuration>

<property>

<name>mapreduce.framework.name</name>

<value>yarn</value>

</property>

<property>

<name>yarn.app.mapreduce.am.env</name>

<value>HADOOP_MAPRED_HOME=/opt/apps/hadoop-3.2.4</value>

</property>

<property>

<name>mapreduce.map.env</name>

<value>HADOOP_MAPRED_HOME=/opt/apps/hadoop-3.2.4</value>

</property>

<property>

<name>mapreduce.reduce.env</name>

<value>HADOOP_MAPRED_HOME=/opt/apps/hadoop-3.2.4</value>

</property>

</configuration>同步配置

在 $HADOOP_HOME/etc/下

scp -r hadoop/yarn-site.xml zhang@node2:/opt/apps/hadoop-3.2.4/etc/hadoop/也可以通过 pwd来表示远程拷贝到和当前目录相同的目录下

scp -r hadoop node2:`pwd` # 注意:这里的pwd需要使用``(键盘右上角,不是单引号),表示当前目录启动 YARN 集群

# 在主服务器(ResourceManager所在节点)上hadoop1启动集群

sbin/start-yarn.sh

# jps查看进程,如下所⽰代表启动成功

==========node1===========

[zhang@node1 hadoop]$ jps

7026 DataNode

7794 Jps

6901 NameNode

7669 NodeManager

==========node2===========

[zhang@node2 hadoop]$ jps

9171 NodeManager

8597 DataNode

8713 SecondaryNameNode

9294 Jps

==========node3===========

[zhang@node3 etc]$ start-yarn.sh

Starting resourcemanager

Starting nodemanagers

[zhang@node3 etc]$ jps

11990 ResourceManager

12119 NodeManager

12472 Jps

11487 DataNode

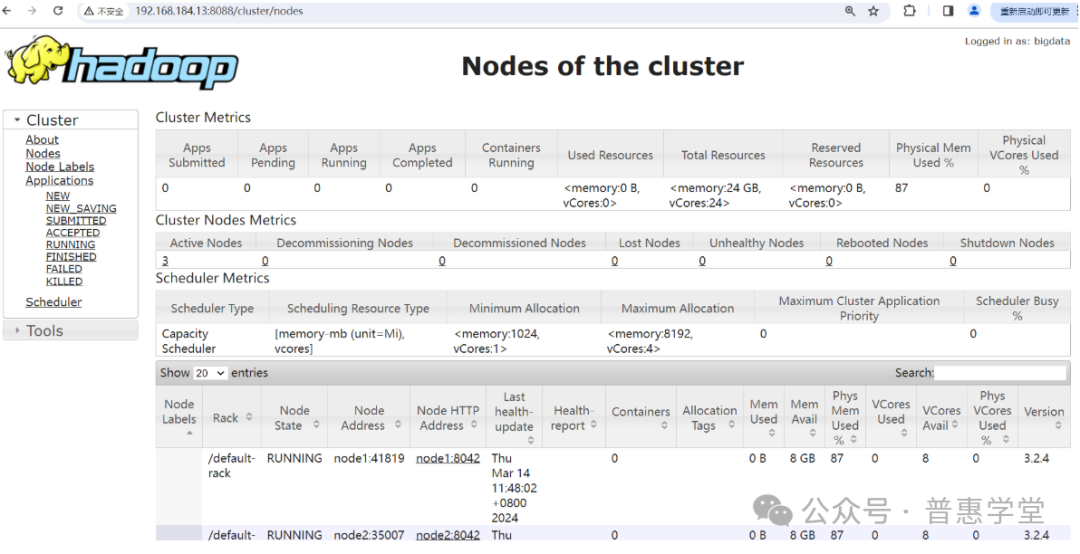

启动成功后,可以通过浏览器访问 ResourceManager 进程所在的节点 node3 来查询运行状态

截图如下:

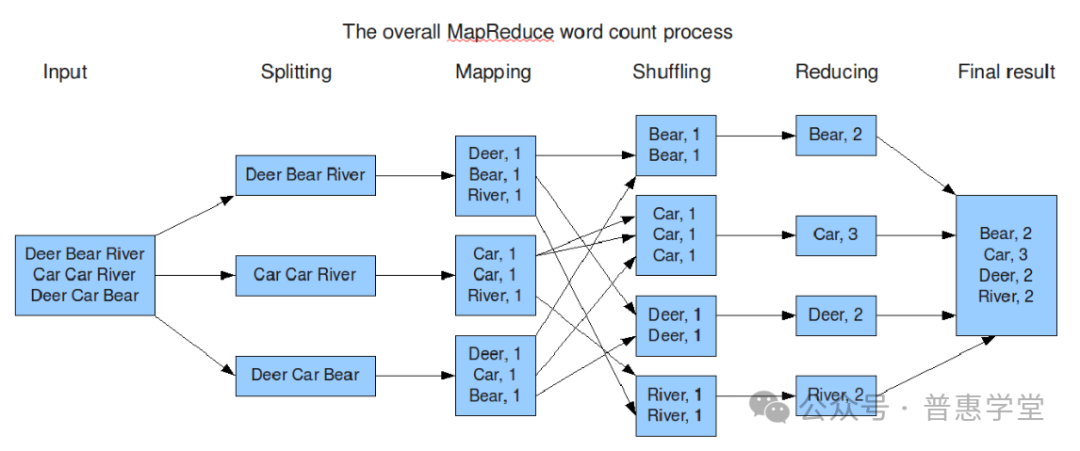

MapReduce

简介和原理

Map(映射)阶段:

-

将输入数据集划分为独立的块。

-

对每个数据块执行用户自定义的 map 函数,该函数将原始数据转换为一系列中间键值对。

-

输出的结果是中间形式的键值对集合,这些键值对会被排序并分区。

Shuffle(洗牌)和 Sort(排序)阶段:

-

在 map 阶段完成后,系统会对产生的中间键值对进行分发、排序和分区操作,确保具有相同键的值会被送到同一个 reduce 节点。

Reduce(归约)阶段:

-

每个 reduce 节点接收一组特定键的中间键值对,并执行用户自定义的 reduce 函数。

-

reduce 函数负责合并相同的键值对,并生成最终输出结果。

下面通过一张使用 MapReduce 进行单词数统计的过程图,来更直观的了解 MapReduce 工作过程和原理

MapReduce 示例程序

在搭建好 YARN 集群后,就可以测试 MapReduce 的使用了,下面通过两个案例来验证使用 MapReduce

-

单词统计

-

pi 估算

具体步骤如下:

PI 估算案例

先切换目录到 安装目录/share/hadoop/mapreduce/ 下

[zhang@node3 ~]$ cd /opt/apps/hadoop-3.2.4/share/hadoop/mapreduce/

[zhang@node3 mapreduce]$ ls

hadoop-mapreduce-client-app-3.2.4.jar hadoop-mapreduce-client-shuffle-3.2.4.jar

hadoop-mapreduce-client-common-3.2.4.jar hadoop-mapreduce-client-uploader-3.2.4.jar

hadoop-mapreduce-client-core-3.2.4.jar hadoop-mapreduce-examples-3.2.4.jar

hadoop-mapreduce-client-hs-3.2.4.jar jdiff

hadoop-mapreduce-client-hs-plugins-3.2.4.jar lib

hadoop-mapreduce-client-jobclient-3.2.4.jar lib-examples

hadoop-mapreduce-client-jobclient-3.2.4-tests.jar sources

hadoop-mapreduce-client-nativetask-3.2.4.jar

[zhang@node3 mapreduce]$

[zhang@node3 mapreduce]$ hadoop jar hadoop-mapreduce-examples-3.2.4.jar pi 3 4

Number of Maps = 3 #

Samples per Map = 4

Wrote input for Map #0

Wrote input for Map #1

Wrote input for Map #2

Starting Job

2024-03-23 17:48:56,496 INFO client.RMProxy: Connecting to ResourceManager at node3/192.168.184.13:8032

2024-03-23 17:48:57,514 INFO mapreduce.JobResourceUploader: Disabling Erasure Coding for #............省略

2024-03-23 17:48:59,194 INFO mapreduce.Job: Running job: job_1711186711795_0001

2024-03-23 17:49:10,492 INFO mapreduce.Job: Job job_1711186711795_0001 running in uber mode : false

2024-03-23 17:49:10,494 INFO mapreduce.Job: map 0% reduce 0%

2024-03-23 17:49:34,363 INFO mapreduce.Job: map 100% reduce 0%

............

Shuffle Errors

BAD_ID=0

CONNECTION=0

IO_ERROR=0

WRONG_LENGTH=0

WRONG_MAP=0

WRONG_REDUCE=0

File Input Format Counters

Bytes Read=354

File Output Format Counters

Bytes Written=97

Job Finished in 53.854 seconds

Estimated value of Pi is 3.66666666666666666667 # 计算结果

命令的含义

单词统计案例

演示步骤如下:

新建文件

新建 word.txt 文件并输入内容如下:

hello java

hello hadoop

java hello

hello zhang java具体命令如下:

[zhang@node3 opt]$ mkdir data

[zhang@node3 opt]$ cd data

[zhang@node3 data]$ ls

[zhang@node3 data]$ vim word.txt上传文件到hadoop

[zhang@node3 data]$ hdfs dfs -mkdir /input # 新建目录

[zhang@node3 data]$ hdfs dfs -ls / # 查看目录

Found 1 items

drwxr-xr-x - zhang supergroup 0 2024-03-23 16:52 /input

[zhang@node3 data]$ hdfs dfs -put word.txt /input # 上传文件到目录

[zhang@node3 data]$ 统计单词

[zhang@node3 mapreduce]$ hadoop jar hadoop-mapreduce-examples-3.2.4.jar wordcount /input /outputx

2024-03-23 18:11:55,438 INFO client.RMProxy: Connecting to ResourceManager at node3/192.168.184.13:8032

#............省略

2024-03-23 18:12:17,514 INFO mapreduce.Job: map 0% reduce 0%

2024-03-23 18:12:50,885 INFO mapreduce.Job: map 100% reduce 0%

2024-03-23 18:12:59,962 INFO mapreduce.Job: map 100% reduce 100%

2024-03-23 18:12:59,973 INFO mapreduce.Job: Job job_1711186711795_0003 completed successfully

2024-03-23 18:13:00,111 INFO mapreduce.Job: Counters: 54

File System Counters

FILE: Number of bytes read=188

FILE: Number of bytes written=1190789

FILE: Number of read operations=0

#............省略

HDFS: Number of write operations=2

HDFS: Number of bytes read erasure-coded=0

Job Counters

Launched map tasks=4

Launched reduce tasks=1

Data-local map tasks=4

Total time spent by all maps in occupied slots (ms)=125180

#............省略

Map-Reduce Framework

Map input records=13

Map output records=27

Map output bytes=270

Map output materialized bytes=206

#............省略

Shuffle Errors

BAD_ID=0

CONNECTION=0

IO_ERROR=0

WRONG_LENGTH=0

WRONG_MAP=0

WRONG_REDUCE=0

File Input Format Counters

Bytes Read=163

File Output Format Counters

Bytes Written=51

[zhang@node3 mapreduce]$

查看统计结果

[zhang@node3 mapreduce]$ hdfs dfs -ls /outputx # 查看输出目录下文件

Found 2 items

-rw-r--r-- 3 zhang supergroup 0 2024-03-23 18:12 /outputx/_SUCCESS

-rw-r--r-- 3 zhang supergroup 51 2024-03-23 18:12 /outputx/part-r-00000

[zhang@node3 mapreduce]$ hdfs dfs -cat /outputx/part-r-00000 # 查看内容

hadoop 3

hello 14

java 6

python 2

spring 1

zhang 1常见问题

错误1:

解决办法:

错误2:

2024-03-16 14:35:57,699 INFO ipc.Client: Retrying connect to server: node3/192.168.184.13:8032. Already tried 7 time(s); retry policy is RetryUpToMaximumCountWithFixedSleep(maxRetries=10, sleepTime=1000 MILLISECONDS)原因:

错误3:

node2: ERROR: JAVA_HOME is not set and could not be found.解决办法:

${HADOOP_HOME}/etc/hadoop/hadoop_en.sh 添加

JAVA_HOME=/opt/apps/opt/apps/jdk1.8.0_281

注意:不能使用 JAVA_HOME=${JAVA_HOME}

错误4:

[zhang@node3 hadoop]$ start-dfs.sh

ERROR: JAVA_HOME /opt/apps/jdk does not exist.解决办法:

修改 /hadoop/etc/hadoop/hadoop-env.sh 文件

添加 JAVA_HOME 配置